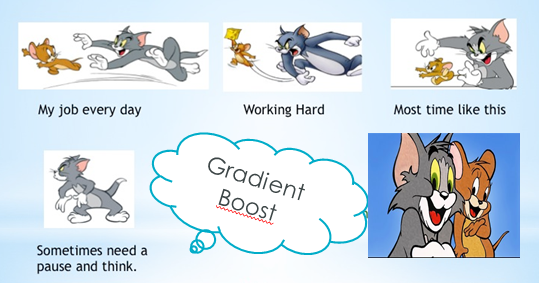

Why use Gradient Boost and how does it work?

In this blog post, we will shed some light on gradient boost, which is a default algorithm in our Analytics platform.

We will touch on:

1) The main purpose of gradient boost and how the technique works.

2) Its advantages and constraints.

3) Some “nice to know” tips when working with gradient boost.

Gradient boost is a machine learning technique whose main purpose is to help weak prediction models become stronger.

Gradient boost works by building one tree at a time, with each new tree correcting the errors made by the previous trees. The underlying theory supports reweighting, so that cases which were weighted badly get their weights adjusted.

For example, misclassified cases gain weight, and cases that are classified correctly lose weight. It is much the same strategy as when dealing with stocks: you balance the investment between bonds and shares. An analogy can also be made to illness: if a doctor tells you that you have a rare disease, you will want to get a few more opinions from other doctors. You then evaluate all the information to make a better decision about how to treat it.
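To make the "one tree at a time, correcting earlier errors" idea concrete, here is a minimal sketch in plain Python. It is not how the Analytics platform implements the algorithm. It assumes a regression problem with squared error and a single input feature, and it uses the residual-fitting form of gradient boosting (each new tree is fitted to what the current ensemble still gets wrong) rather than explicit reweighting. The names `fit_stump`, `predict_stump`, `boost` and `learning_rate`, as well as the toy data, are made up for illustration.

```python
# Minimal sketch: gradient boosting for regression with squared error,
# using one-split trees (stumps) on a single feature. Illustrative only.

def fit_stump(xs, targets):
    """Find the single split on x that minimises squared error of the targets."""
    best = None
    values = sorted(set(xs))
    for lo, hi in zip(values, values[1:]):
        threshold = (lo + hi) / 2
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((t - left_mean) ** 2 for t in left)
                 + sum((t - right_mean) ** 2 for t in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return threshold, left_mean, right_mean


def predict_stump(stump, x):
    threshold, left_mean, right_mean = stump
    return left_mean if x <= threshold else right_mean


def boost(xs, ys, n_trees=20, learning_rate=0.5):
    """Build stumps one at a time; each one is fitted to the current residuals."""
    base = sum(ys) / len(ys)            # start from a constant prediction
    predictions = [base] * len(ys)
    stumps, history = [], []
    for _ in range(n_trees):
        # The errors the ensemble still makes are the targets for the next tree.
        residuals = [y - p for y, p in zip(ys, predictions)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)            # earlier stumps are never revisited
        predictions = [p + learning_rate * predict_stump(stump, x)
                       for p, x in zip(predictions, xs)]
        history.append(sum((y - p) ** 2 for y, p in zip(ys, predictions)) / len(ys))
    return base, stumps, history


# Toy data, invented for this example.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.0, 1.2, 0.9, 3.1, 3.0, 2.9, 5.2, 5.0]
base, stumps, history = boost(xs, ys)
print("training error after first tree:", history[0])
print("training error after last tree: ", history[-1])
```

Each stump on its own is a weak model, but the training error in `history` shrinks as more of them are added, which is the "weak models become stronger" effect described above.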

Why use gradient boost:

- Gradient boost provides the user with a powerful tool to boost/improve weak prediction models.

- Gradient boost works well with both regression and classification problems, so decision trees can benefit from applying gradient boost.

- Gradient boost is widely regarded in the industry as one of the best techniques for improving models.

- Gradient boost works in a stagewise fashion: once a tree has been fitted, it does not go back and readjust that tree when building the next one.

As with all machine learning algorithms, gradient boost also has some constraints:

- There is a chance of overfitting.

“Nice to know” tips:

- A natural way to reduce the risk of overfitting is to monitor and adjust the number of iterations.

- The depth of the trees might influence the prediction error. Observe what happens if each tree is a stump, i.e. one level deep.
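The first tip can be tried out with a small experiment: hold back part of the data, track the error on it after every iteration, and keep the iteration count where that error is lowest. The sketch below is one possible way to do this in plain Python, not the platform's own procedure. It uses stumps (depth 1) as the trees, squared error as the loss, and synthetic noisy data. All names (`fit_stump`, `stump_value`, `monitor`, `learning_rate`) and the data are assumptions made for illustration.

```python
import random

def fit_stump(xs, targets):
    """Best single split (a depth-1 tree) under squared error."""
    best = None
    values = sorted(set(xs))
    for lo, hi in zip(values, values[1:]):
        threshold = (lo + hi) / 2
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        err = sum((t - lm) ** 2 for t in left) + sum((t - rm) ** 2 for t in right)
        if best is None or err < best[0]:
            best = (err, threshold, lm, rm)
    return best[1:]                      # (threshold, left mean, right mean)

def stump_value(stump, x):
    threshold, lm, rm = stump
    return lm if x <= threshold else rm

def mse(ys, preds):
    return sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys)

def monitor(train, valid, n_trees=100, learning_rate=0.3):
    """Boost on the training set and record validation error per iteration."""
    xs, ys = zip(*train)
    vx, vy = zip(*valid)
    base = sum(ys) / len(ys)
    train_pred = [base] * len(ys)
    valid_pred = [base] * len(vy)
    valid_errors = []
    for _ in range(n_trees):
        residuals = [y - p for y, p in zip(ys, train_pred)]
        stump = fit_stump(xs, residuals)
        train_pred = [p + learning_rate * stump_value(stump, x)
                      for p, x in zip(train_pred, xs)]
        valid_pred = [p + learning_rate * stump_value(stump, x)
                      for p, x in zip(valid_pred, vx)]
        valid_errors.append(mse(vy, valid_pred))
    # Iterations are counted from 1; pick the one with the lowest held-out error.
    best_iteration = valid_errors.index(min(valid_errors)) + 1
    return valid_errors, best_iteration

# Synthetic noisy data, invented for this example (fixed seed for repeatability).
rng = random.Random(0)
data = [(x / 10, (x / 10) ** 2 + rng.gauss(0, 1.0)) for x in range(60)]
rng.shuffle(data)
train, valid = data[:40], data[40:]

valid_errors, best_iteration = monitor(train, valid)
print("iteration with lowest validation error:", best_iteration)
```

If the validation error starts to rise while more trees are still being added, that is the overfitting signal, and stopping at `best_iteration` is a reasonable choice. To explore the second tip, the same loop could be rerun with deeper trees in place of `fit_stump` and the two validation curves compared.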

Hi soumya-hcl

Gradient boost works well with both regression and classification problems. ;o)

Best,

abarki