ThingworxAnalytics

Hi Friends,

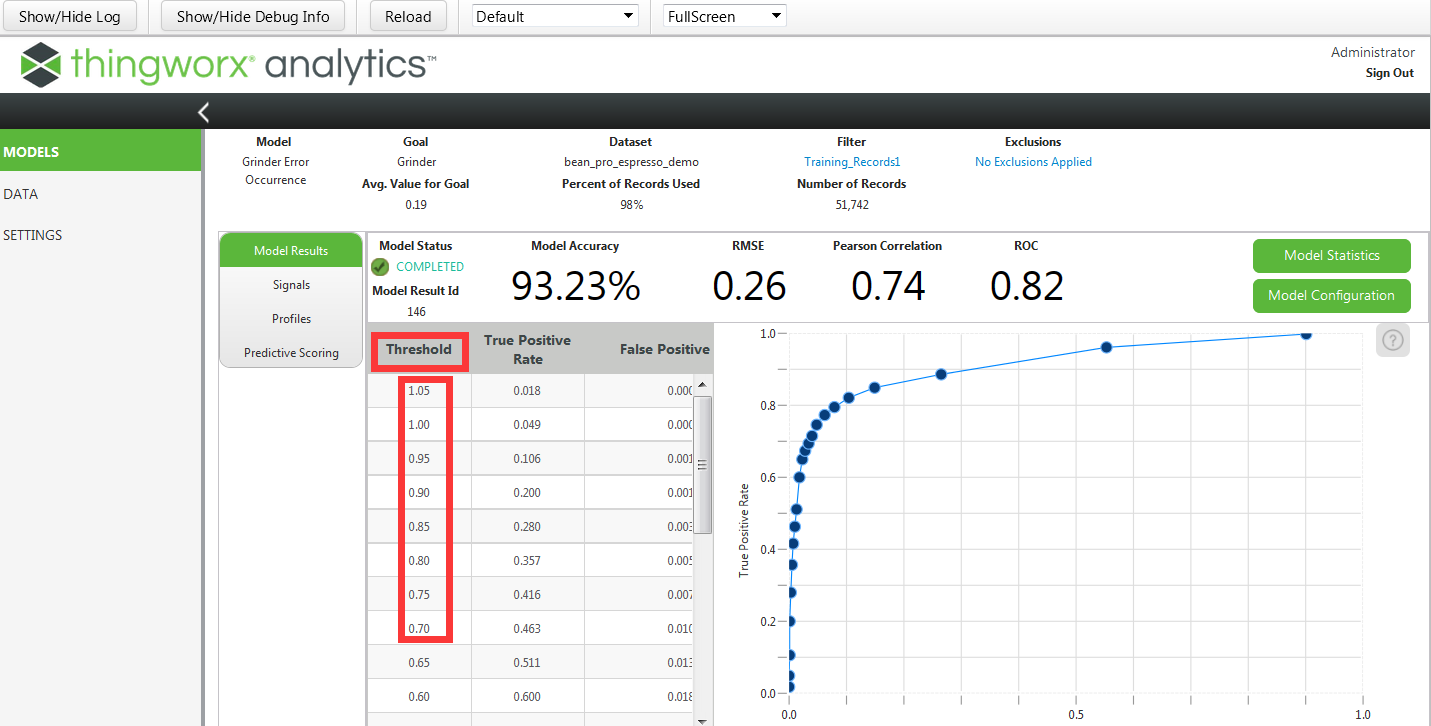

I have a question about some of the parameters in picture A: what do they mean? Also, what do the parameters in picture B mean, and why should I fill them in?

- Labels: Analytics, Troubleshooting

Hi Joe

For picture A, the table and the ROC curve go together and describe the accuracy of the model.

You may want to look at this blog post for some input: Metrics for Model evaluation used in ThingWorx Analytics

For picture B, there is a good explanation in the online help in Builder on that page (click the question mark icon).

Basically, if you ask for a number of important features, that many important features are appended to the scoring result.

Those important features are the features that most influence the prediction of the goal.

So this gives you some added insight into what is impacting the prediction, but it does not change the prediction.

The Additional Features field is used if you want to show the values of some specific features in the output scoring result. Again, this does not change the prediction; it just adds some information to the scoring result file.
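To illustrate the idea, here is a minimal sketch in Python. It is not how ThingWorx Analytics computes anything internally: it assumes a hypothetical linear model where a feature's contribution is its weight times its value, and every name in it (`WEIGHTS`, `score_row`, the output column names) is made up for the example. The point is only that the extra columns are added next to the prediction and never alter it.

```python
# Hypothetical linear model: these weights are invented for illustration only.
WEIGHTS = {"temperature": 0.8, "pressure": -0.5, "vibration": 0.1}

def score_row(row, important_feature_count=0, additional_features=()):
    """Return a scoring result dict; the extra columns never alter the prediction."""
    contributions = {name: WEIGHTS[name] * row[name] for name in WEIGHTS}
    result = {"prediction": sum(contributions.values())}

    # Rank features by absolute contribution and append the top N names.
    ranked = sorted(contributions, key=lambda n: abs(contributions[n]), reverse=True)
    for i, name in enumerate(ranked[:important_feature_count], start=1):
        result[f"important_feature_{i}"] = name

    # Copy the raw values of explicitly requested features into the output.
    for name in additional_features:
        result[f"additional_{name}"] = row[name]
    return result

row = {"temperature": 2.0, "pressure": 1.0, "vibration": 3.0, "machine_id": 7}
plain = score_row(row)
rich = score_row(row, important_feature_count=2, additional_features=["machine_id"])
```

Here `plain` and `rich` carry the same `prediction`; `rich` just has three more columns (two important feature names and the requested `machine_id` value).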

Hope this helps

Christophe

Hi Joe

Regarding the question about the ROC curve (picture A), in addition to the link I sent earlier, I recommend you watch the video at http://www.dataschool.io/roc-curves-and-auc-explained/.

It should give you an understanding of what the threshold means.

Note that the threshold is not something you can control in ThingWorx Analytics, but the curve does give information on the performance of the model.

The Area Under the ROC Curve (AUC) is what appears as ROC in the Builder UI, to the right of RMSE and Pearson.
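If it helps to see the threshold idea in code, here is a small generic sketch in plain Python. It is not taken from ThingWorx Analytics; the function names `roc_points` and `auc` and the sample data are assumptions made up for illustration. Each distinct score is tried as a threshold, which gives one (false positive rate, true positive rate) point on the curve, and the area is then computed with the trapezoidal rule.

```python
def roc_points(labels, scores):
    """Return (FPR, TPR) pairs, one per threshold, from strictest to loosest."""
    positives = sum(labels)
    negatives = len(labels) - positives
    points = [(0.0, 0.0)]  # threshold above every score: nothing predicted positive
    for threshold in sorted(set(scores), reverse=True):
        predicted = [s >= threshold for s in scores]
        tp = sum(1 for p, y in zip(predicted, labels) if p and y == 1)
        fp = sum(1 for p, y in zip(predicted, labels) if p and y == 0)
        points.append((fp / negatives, tp / positives))
    return points

def auc(points):
    """Area under the curve using the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area

# Made-up data: 1 = actual positive, 0 = actual negative.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
curve = roc_points(labels, scores)
area = auc(curve)  # 8/9 for this data, about 0.89
```

An AUC of 1.0 means the model ranks every positive above every negative, while 0.5 is what random guessing would give.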

Kind regards

Christophe

Hi Christophe

Thanks for your patience. I am trying to understand this.

Best regards

Joe

Joe,

Any update on this? Was Christophe Morfin's post helpful? If so, could you click on the "correct answer" or "mark as helpful" button and let us know?